Context is key to surpass AI’s 40% success: Gartner D&A 2026

Earlier this month, I joined thousands of data leaders in Orlando for the Gartner Data & Analytics Summit. The halls were buzzing with the promise of autonomous agentic workflows. But as I reflect on the sessions and conversations on the floor, I keep returning to two pillars: context and governance.

These are prerequisites for true agentic workflows, where agents make decisions and act in real time.

- Context bridges the gap between your data and your business logic. It enables your agents to make decisions you can trust.

Context helps agents make better decisions. For this we need knowledge engineering to extract tribal knowledge, decision graphs to map exceptions, context graphs to connect the dots via relationships, clean metadata and a rich semantic layer.

- Governance ensures agents operate within safe boundaries, preventing "really bad" decisions that could expose an organization to regulatory or legal risk.

We need both data and AI governance to ensure agents cannot use or do things they shouldn’t. Data governance secures the data across the lifecycle. Machine access controls prevent agents from accessing what they shouldn’t and user-based permissioning applies that to humans. Combining these with AI guardrails helps ensure safe, ethical and reliable agent operations.

Without context, your agents can make the wrong decisions confidently. Without proper governance, they can act on data they shouldn’t even be able to access. Without the strong foundation of both, your agents will remain an expensive experiment that never graduates to production. Read on for a full recap of Gartner D&A Summit 2026.

The ROI gap

Gartner shared a sobering data point: While four out of five organizations are deploying AI, only one out of five is seeing a return on investment. This gap between 80% of organizations investing in AI and only 20% seeing ROI no doubt contributes to the fact that most agentic projects fail. It’s hard to invest when you don’t see a return.

Interestingly, while 67% of data leaders are concerned about uncertain costs, fewer than half actually monitor AI for FinOps. This leads to a critical question: Is the ROI gap based on measurable results or just perceived value? Regardless, the executive conversation has shifted from "What can we use AI for?" to "What should we use AI for?"

The answer to that depends on the reliability of the underlying data. To move a project from pilot to production, organizations must first address a massive confidence deficit in their data foundations. Today, only 14% of data leaders feel confident that their data is properly governed and secured for AI. This lack of trust is likely why Gartner predicts 60% of AI projects will fail by 2028 due to the lack of a consistent semantic layer.

Adding context to semantic layers

Gartner’s failure forecast for AI should give leaders pause. A 60% failure rate is expected for agentic projects without a consistent semantic layer. Disconnected data and poor context are expected to prevent reliable, scalable performance. Gartner’s Andres Garcia-Rodeja said it best:

"Semantics, knowledge graphs and ontologies are no longer niche technologies... but essential components of AI-ready data infrastructure."

Here are three emerging trends:

1. The shift to deterministic semantics

We are seeing a major shift in how we provide agents with the data they need to do a task. Let’s take SQL queries as an example. Using an LLM to generate SQL directly from natural language prompts is the least accurate approach. Accuracy improves when we provide a semantic graph for the LLM to traverse. The most accurate and reliable results come from deterministic semantics, where LLMs translate prompts into queries of a semantic view rather than raw data.
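To make the distinction concrete, here is a minimal Python sketch of deterministic semantics. It assumes the LLM's only job is to turn a prompt into a small structured request (metric and dimension names), and that a plain function then compiles that request into SQL from fixed definitions. The contents of `SEMANTIC_VIEW`, the table and column names and the `compile_query` helper are all hypothetical, chosen for illustration rather than taken from any particular product.

```python
# Hypothetical semantic view: every name below is an illustrative assumption.
SEMANTIC_VIEW = {
    "table": "sales.orders_curated",
    "metrics": {
        "total_revenue": "SUM(order_amount)",
        "order_count": "COUNT(DISTINCT order_id)",
    },
    "dimensions": {
        "region": "customer_region",
        "order_month": "DATE_TRUNC('month', order_date)",
    },
}


def compile_query(request: dict, view: dict = SEMANTIC_VIEW) -> str:
    """Deterministically compile a structured request into SQL.

    The request is what an LLM would emit instead of raw SQL, e.g.
    {"metrics": ["total_revenue"], "dimensions": ["region"]}.
    Names missing from the semantic view are rejected rather than guessed.
    """
    metrics = request.get("metrics", [])
    dimensions = request.get("dimensions", [])
    if not metrics:
        raise ValueError("at least one metric is required")

    unknown = [m for m in metrics if m not in view["metrics"]]
    unknown += [d for d in dimensions if d not in view["dimensions"]]
    if unknown:
        raise ValueError(f"not defined in the semantic view: {unknown}")

    select = [f"{view['dimensions'][d]} AS {d}" for d in dimensions]
    select += [f"{view['metrics'][m]} AS {m}" for m in metrics]
    sql = f"SELECT {', '.join(select)} FROM {view['table']}"
    if dimensions:
        # Group by the output positions of the dimension columns.
        positions = ", ".join(str(i + 1) for i in range(len(dimensions)))
        sql += f" GROUP BY {positions}"
    return sql


print(compile_query({"metrics": ["total_revenue"], "dimensions": ["region"]}))
```

The same request always produces the same SQL, and a metric the view does not define fails loudly instead of being improvised. That is the property the word "deterministic" is pointing at in this sketch.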

This transition seems to be well underway. Gartner reports that 44% of organizations have implemented a semantic layer in 2025 and another 48% plan to by 2027.

2. The unified context layer

Silos are the silent killers of agentic projects. Data context is often scattered across multiple disconnected systems (ingestion, transformation, orchestration, compute, etc.). The lack of standards forces teams to stitch together metadata from disparate sources for each AI agent.

Leading platforms, like Databricks and Snowflake, are bringing context into their ecosystems, but they cannot see beyond their walled gardens. What’s missing is a context layer that aggregates metadata from across systems and builds the knowledge graphs that agents need to make decisions we can trust.
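As a rough illustration of what "aggregating metadata from across systems" could look like, the Python sketch below merges metadata records from three separate systems into one small graph and then walks it for lineage. The record shapes, asset names and the `build_context_graph` and `lineage` helpers are hypothetical; real systems expose metadata in very different formats, and a production context layer would need far more than this.

```python
# Hypothetical metadata exports from three separate systems.
INGESTION = [
    {"asset": "raw.orders", "source": "orders_api", "owner": "ingest-team"},
]
TRANSFORMATION = [
    {"asset": "curated.orders", "reads": ["raw.orders"],
     "description": "Deduplicated orders"},
    {"asset": "curated.revenue", "reads": ["curated.orders"],
     "description": "Daily revenue"},
]
ORCHESTRATION = [
    {"asset": "curated.revenue", "schedule": "daily", "last_run_ok": True},
]


def build_context_graph(*sources):
    """Merge per-system metadata into one graph keyed by asset name.

    Returns (nodes, upstream): attributes per asset, and the list of
    assets each asset reads from.
    """
    nodes, upstream = {}, {}
    for records in sources:
        for record in records:
            asset = record["asset"]
            attributes = nodes.setdefault(asset, {})
            for key, value in record.items():
                if key == "asset":
                    continue
                if key == "reads":
                    upstream.setdefault(asset, set()).update(value)
                    for parent in value:
                        # Referenced parents become nodes even if no
                        # system described them directly.
                        nodes.setdefault(parent, {})
                else:
                    attributes[key] = value
    return nodes, {a: sorted(p) for a, p in upstream.items()}


def lineage(asset, upstream):
    """Return every upstream asset reachable from `asset`, nearest first."""
    seen, queue = [], list(upstream.get(asset, []))
    while queue:
        current = queue.pop(0)
        if current not in seen:
            seen.append(current)
            queue.extend(upstream.get(current, []))
    return seen


nodes, upstream = build_context_graph(INGESTION, TRANSFORMATION, ORCHESTRATION)
print(lineage("curated.revenue", upstream))
print(nodes["curated.revenue"])
```

Once merged, a single lookup on `curated.revenue` returns its description, schedule and run status together, and the lineage walk reaches `raw.orders`, which no single source system could answer on its own in this toy setup.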

3. No action without governance

We are moving toward a perceptive state where models adapt to real-time inputs and take autonomous actions. In this new world, we are no longer building static dashboards for humans. We’re enabling autonomous agents.

For this to work, we must secure the data and the agent. We need to ensure that sensitive data never reaches the models and that both human and agent users only access what they need for their function.
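The two requirements above can be sketched in a few lines of Python: a least-privilege check that applies to every principal, plus a stricter rule that keeps sensitive columns away from agents even when they are otherwise permitted. The `Principal` class, the `SENSITIVE_COLUMNS` set and the `visible_fields` helper are hypothetical names for illustration; in practice the classification would come from governed metadata rather than a hard-coded set.

```python
from dataclasses import dataclass

# Hypothetical classification; assumed to come from metadata in a real system.
SENSITIVE_COLUMNS = frozenset({"ssn", "card_number"})


@dataclass(frozen=True)
class Principal:
    name: str
    kind: str  # "human" or "agent"
    allowed_columns: frozenset


def visible_fields(principal: Principal, row: dict) -> dict:
    """Return only the fields this principal may see.

    Both humans and agents are limited to their allowed columns.
    Agents additionally never receive sensitive columns, so those
    values cannot end up in a model's input.
    """
    visible = {}
    for column, value in row.items():
        if column not in principal.allowed_columns:
            continue  # least privilege: not needed for this function
        if principal.kind == "agent" and column in SENSITIVE_COLUMNS:
            continue  # sensitive data never reaches the model
        visible[column] = value
    return visible


row = {"customer_id": 7, "ssn": "123-45-6789", "balance": 120.5}
agent = Principal("support-agent", "agent",
                  frozenset({"customer_id", "balance", "ssn"}))
analyst = Principal("fraud-analyst", "human",
                    frozenset({"customer_id", "ssn"}))

print(visible_fields(agent, row))    # ssn is dropped for the agent
print(visible_fields(analyst, row))  # balance is dropped for the analyst
```

Keeping the agent rule separate from the permission list is a deliberate choice in this sketch: a misconfigured grant on the agent cannot leak a sensitive column by itself.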

These three trends can help organizations get their AI prototypes into production. They require an investment in the data foundation to build trust in the products built on top of it. This is precisely what we have done at Capital One, where models run against data products and ontologists are on staff.

The Capital One blueprint: Data management as infrastructure

At Capital One, data management is considered the high-leverage infrastructure that everything runs on. The fireside chat between Gartner’s Rita Sallam and Amy Lenander, CDO of Capital One, provides a practical blueprint for organizations to follow.

- The rise of data products: Capital One realized a long time ago that for data to be useful across the organization it needs to be packaged as a product. It needs to be curated, verified, governed and documented so that other business units can find, understand and use the data effectively.

- The data product manager: As data products multiply, data stewards will transform into data product managers. Data product managers are focused on making data valuable for the business, bridging the gap between the data producer and the data consumer.

- Data products vs data lakes: For AI agents to be reliable, the authoritative source cannot be a raw data lake. It must be a curated, defined and governed data product. And when agents run against data products, improving metadata becomes a prerequisite. It is no longer a chore no one wants, but the basis for success.

- Risk management as an enabler: Rather than a bottleneck, risk management and data security should be treated as enablers. Capital One adopts an intent-driven data strategy to align governance with business value. The focus is on how data can be used responsibly, rather than simply denying access by default to maintain zero trust.

- Ontologists on staff: Scaling AI reliably requires intentional curation. We view ontologies as essential for building AI responsibly and have a dedicated team of ontologists.

Building on this vision, Jeff Chou, VP of AI & ML at Capital One, provides a technical deep dive into context layers and how they can bridge the gap between successful experiments and value-generating agents.

Engineering the context layer

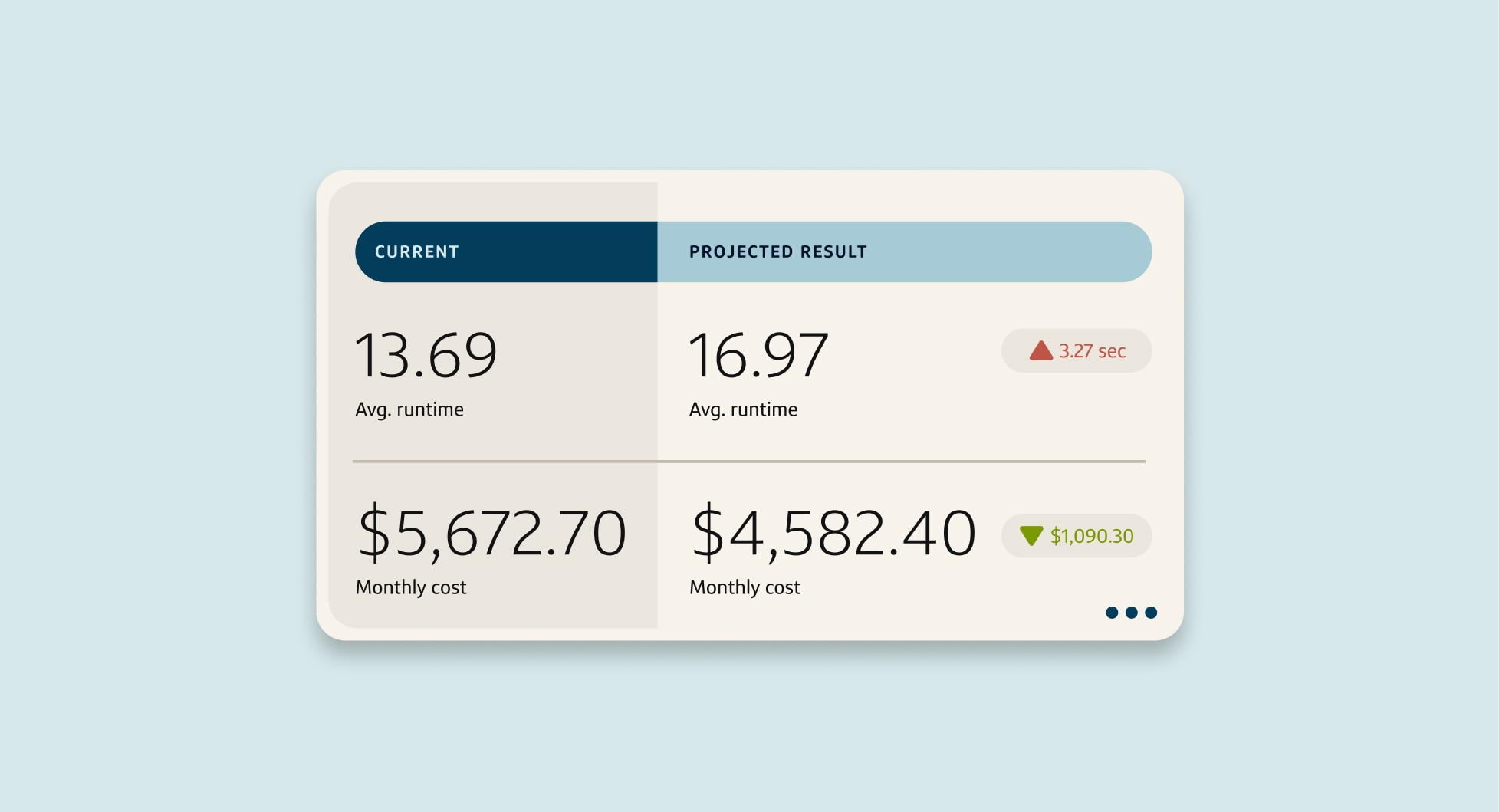

While many organizations are struggling to prove ROI from AI projects, Capital One Software’s internal research and testing have demonstrated that providing agents with context is the key to unlocking both efficiency and security at scale.

Reliable AI agents need more than just access to your data. They need a unified context layer to make sense of your data. They need a curated, governed environment with:

- Entities and activities: Clear mapping of business objects, like "Customer" or "Transaction."

- Environmental conditions: Real-time data on system health, pipeline performance and governing policies.

- Deterministic logic: SQL query generation based on semantic definitions, historical performance and projected downstream implications.

Capital One Software has been testing a few use cases for context-aware AI internally and, while it’s still early days, the results have been promising:

- SQL queries written using deterministic semantics show a 20% performance improvement on average.

- Context-aware tokenization has been found to protect sensitive data at scale (up to 4 million tokens per second) without sacrificing usability. It also results in significantly higher model accuracy than data masking.
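One plausible reason tokenized data stays more useful than masked data is that tokens can preserve equality between values. The Python sketch below illustrates that idea only. It uses a keyed HMAC-SHA256 as a stand-in tokenizer, which is an assumption made for this example and not a description of how Capital One Databolt or context-aware tokenization actually works. The key, the sample account values and both helper functions are hypothetical.

```python
import hashlib
import hmac

# Illustrative key only; a real deployment would fetch it from a key manager.
SECRET_KEY = b"demo-key-not-for-production"


def tokenize(value: str, key: bytes = SECRET_KEY) -> str:
    """Replace a value with a keyed, deterministic token (HMAC-SHA256)."""
    digest = hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return f"tok_{digest[:16]}"


def mask(value: str) -> str:
    """Replace every character with '*', discarding all information."""
    return "*" * len(value)


accounts = ["4111-0001", "4111-0002", "4111-0001"]
tokens = [tokenize(a) for a in accounts]
masked = [mask(a) for a in accounts]

# Equal inputs map to equal tokens, so joins and group-bys still work.
print(len(set(tokens)))
# Masking collapses distinct values of the same length into one.
print(len(set(masked)))
```

In this toy data the tokens still distinguish the two accounts while the masked values do not, so a model or query working on tokens keeps the signal that two rows belong to the same account.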

The governance paradox: Intent-driven security as an enabler

Context is one prerequisite for agentic project success; governance is the other. At Capital One, risk management and data security are seen as enablers, rather than gates.

Ron Ayers, Sr. Product Manager of Capital One Databolt, argues that by adopting an intent-driven data strategy organizations can align security, governance, and regulatory requirements with business value. Intent-driven data security centers on building a data foundation that supports current and future use cases, rather than layering on security as an afterthought. Instead of blocking access, this strategy focuses on how data can be used responsibly to maximize its value.

Capital One’s own journey with Databolt is evidence that intent-driven data security can act as an enabler for AI projects, by providing the guardrails necessary for experimentation.

Conclusion

If there was one turning point at Gartner Data & Analytics Summit 2026, it was the realization that knowledge engineering is the new MVP of the data team. Semantic layers, knowledge graphs and ontologies are no longer niche technologies; they are essential components of an AI-ready data infrastructure.

The quicker an organization can get its data ready for AI securely and set up the metadata and context foundation, the faster it can unlock the true value of agentic AI: autonomous agents that adapt to inputs and take action in real time.

If you’re at your desk and wondering where to start, here’s a plan:

- Audit your context: Where does it live? What is actively maintained and machine-readable? What lives in people’s heads?

- Evaluate your team: Do you have the skillsets you need? Do you have ontologists to map the system and data product managers to bridge the gap between data producer and consumer?

- Modernize your governance: Shift to an intent-driven data strategy. Define the data governance guidelines, AI policies, guardrails and machine access controls early, so that risk management becomes an enabler of automation rather than a bottleneck.

- Build the context layer: Start small and prove value. Select a high-impact use case and build the ontology and knowledge graph for that pilot domain. Compare AI accuracy and performance and use that data to get buy-in across the organization.

- Consolidate metadata: Combat fragmentation by moving toward a unified context layer that stores all of your metadata from across sources and uses that information to build the knowledge graphs agents need to be autonomous.

The future is agentic, but only if we build the foundation agents need to be accurate and reliable. At Capital One Software, we are committed to helping organizations build this foundation through our data management solutions. Interested in learning more? Schedule an exploratory conversation with the team.